From Screens to Systems—Multimodal HMI and the Rise of AR HUD

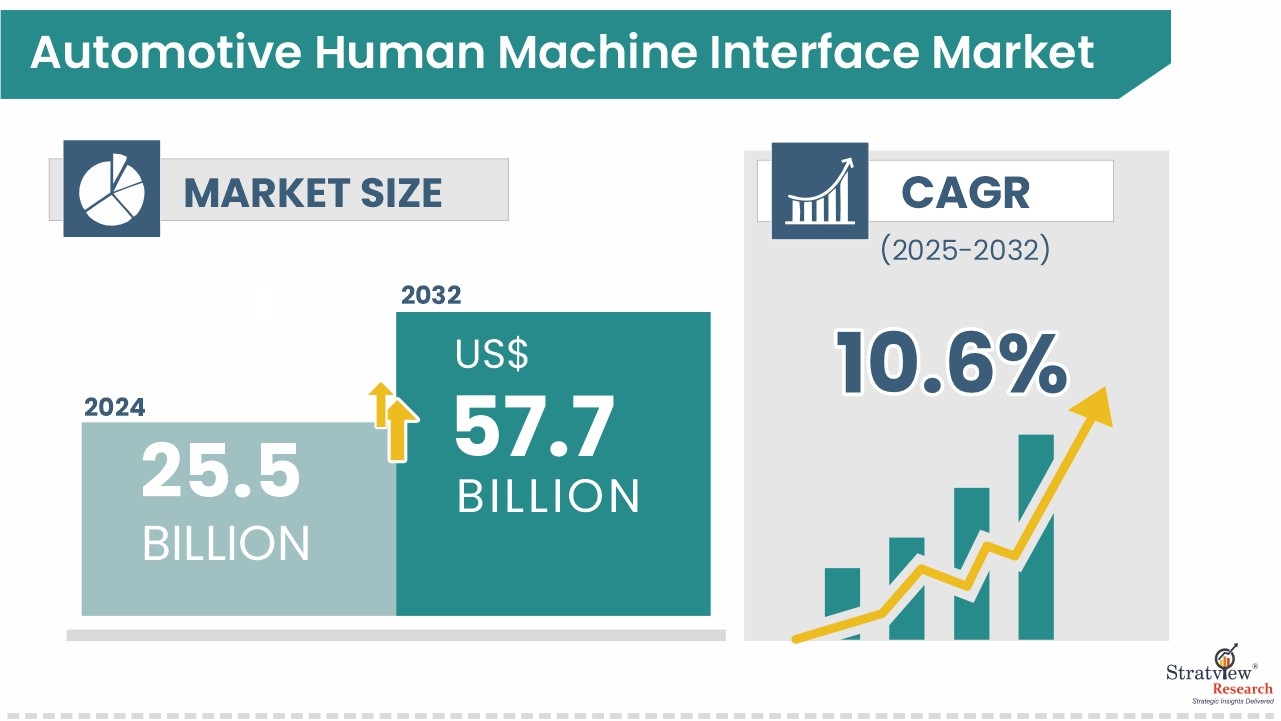

As vehicles become rolling computers, HMI defines how drivers perceive intelligence, safety, and brand character. The projected expansion of the automotive human-machine interface market, from USD 25.5 billion in 2024 to USD 57.7 billion by 2032 (10.6% CAGR), reflects a broad transition to software-defined, connected cockpits that integrate voice, touch, and projected information.
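For readers who want to sanity-check the headline figures, here is a minimal Python sketch of the compound-growth arithmetic. The function names `cagr` and `project` are illustrative, and the choice of eight compounding periods (2024 to 2032) is an assumption, since the base year used in the report is not stated here.

```python
def cagr(start_value: float, end_value: float, periods: int) -> float:
    """Compound annual growth rate over `periods` yearly compounding steps."""
    if start_value <= 0 or periods <= 0:
        raise ValueError("start_value and periods must be positive")
    return (end_value / start_value) ** (1 / periods) - 1


def project(start_value: float, rate: float, periods: int) -> float:
    """Value reached by compounding `start_value` at `rate` for `periods` years."""
    return start_value * (1 + rate) ** periods


# Figures quoted in the article (USD billion); the period count is an assumption.
START, END = 25.5, 57.7
YEARS = 2032 - 2024

implied = cagr(START, END, YEARS)
projected = project(START, 0.106, YEARS)

print(f"Implied CAGR over {YEARS} years: {implied:.2%}")
print(f"USD {START}B compounded at 10.6% for {YEARS} years: {projected:.1f}B")
```

Under that eight-period assumption, the endpoints imply a rate of about 10.7%, and compounding at the quoted 10.6% lands near USD 57.1 billion. The small gap is consistent with rounding or a different base year in the report, though that explanation is a guess rather than something the source confirms.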

Download the sample report here:

https://stratviewresearch.com/Request-Sample/639/automotive-human-machine-interface-market.html#form

Drivers

AI-first interaction is reshaping expectations. NLP-based voice control now handles natural speech, accents, and context, while ML tunes menus and suggestions to driving patterns. Beyond convenience, these systems can improve safety by helping drivers keep their eyes on the road and hands on the wheel. Stratview cites AI/ML, including NLP and gesture control, as core drivers of HMI adoption.

The second structural driver is diversity in access type. Standard HMI remains prevalent, but the fastest growth belongs to multimodal systems that blend visual (display/cluster/HUD), acoustic (voice/tones), and other cues. Combining modalities can reduce task time and error rates under varied conditions, which matters most for ADAS handovers and complex navigation scenarios.

Third, value migrates downmarket. With mid-priced passenger cars expected to dominate, suppliers must deliver premium feel at scale—crisper displays, responsive touch/rotary inputs, timely voice feedback, and coherent UI conventions across trims and regions.

Trends

Visual remains king, but composition is changing. The visual interface category is the largest by technology, yet layouts are evolving from single monoliths to distributed displays (cluster + center + passenger) unified by consistent UI logic.

AR-inflected HUDs are the breakout. The head-up display (HUD) is projected to be the fastest-growing product type, moving from luxury models into broader lineups. As ADAS stacks mature, the HUD becomes the natural surface for lane guidance, speed and speed-limit display, hazard prompts, and turn-by-turn navigation, minimizing glance time and cognitive load.

Voice regains trust through better NLP. Fewer “please repeat” moments and richer intent handling make voice a true primary interface for navigation, calls, media, climate, and simple vehicle settings—especially useful in markets with complex scripts or when touch input is impractical.

Geography matters. Asia-Pacific is expected to stay the largest regional market, reflecting rapid EV adoption, high smartphone penetration (driving expectations for UI smoothness), and dense electronics ecosystems that compress cost curves.

Ecosystem consolidation continues. Tier-1s (Continental, Visteon, Valeo, Harman) collaborate with software houses (Luxoft, Altran) and component specialists (Synaptics, Alpine, Magneti Marelli) to deliver integrated hardware-software platforms with OTA support—shortening time-to-feature across global programs.

Conclusion

The next wave of automotive HMI is system-level: multimodal by default, visual-led but voice-competent, and primed for AR HUD prominence as ADAS deepens. With mid-priced vehicles driving scale and APAC leading demand, success hinges on consistent UX across screens, robust voice pipelines, a safe information hierarchy, and platform architectures that support continuous updates. Stratview's forecast of USD 57.7 billion by 2032 points to ample runway for suppliers who can merge elegant design with reliable, upgradeable engineering.
